Then a new sound fills the room. Crip. Johan turns again, and this time his eye is caught by a stuffed bear in a clear, lighted box mounted on the far wall. In a few seconds the box goes dark, and the first sound starts playing again. Tack. Tack. Johan goes back to the frog. But soon that other word reverberates through the room again: Crip. The change makes Johan turn, and again a clear box on the wall lights up, this time revealing a pink rabbit holding a drum. The box soon goes dark, and again Johan busies himself with the frog. He doesn't know it, but he has just learned two new words.

Imagine studying a new language without the help of a translator. At first it's nothing more than a stream of babble. Somehow, though, babies begin to pick individual sounds out of the stream, distinguishing where one word ends and another begins. By four months they can recognize their names; by the time they utter their first words, around the age of twelve months, they understand hundreds more.

How do these helpless, tiny creatures accomplish such a monumental task? Infant-language researchers believe babies are born with a genetic aptitude for language, one that emerges over time. Shortly after birth, infants can discriminate among a large number of sounds that occur in many languages, but they gradually develop a preference for the sounds of their native tongue. Similarly, in the second half of their first year, babies start paying less attention to subtle differences in pronunciation and more attention to whole words and phrases. The rhythms of speech become attractive, helping them remember words they hear frequently. So, while on the outside babies appear weak, barely able to sit up or crawl, on the inside their brains are working overtime. Making connections between sounds, listening for familiar phrases, building a vocabulary: even at this age, babies are slowly but surely fitting pieces into the unique and complicated puzzle that makes them who they are.

This picture is in stark contrast to the long-held belief that newborns are empty vessels waiting for their environment to fill them with words. Conventional wisdom began to change in the 1960s, when Massachusetts Institute of Technology linguist Noam Chomsky introduced his controversial nature-over-nurture theory. Chomsky argued that the sing-songy baby talk adults adopt when they speak to infants - now known as Parentese - is far too garbled to be useful in teaching language. Linguistic knowledge is entirely innate, he maintained, part of our genetic programming that unfolds over time according to a biological schedule.

Other researchers disagreed, arguing that social interaction also plays a large part in shaping a baby's language acquisition. Today the hypotheses of most experts, including James Morgan, associate professor of cognitive and linguistic sciences, fall between the two extremes of nature and nurture. "Even though babies are pretty powerful computational machines," Morgan says, "they still need data to work on."

More intriguing than the nature-versus-nurture debate, though, is the question of how babies process that data. Morgan is part of a small but growing group of psychologists who study infant speech perception, trying to determine what it is that babies hear in conversation and how they organize that information into meaningful words and phrases. It was, Morgan says, a landmark 1971 study by cognitive science professor emeritus Peter Eimas, psychology professor Einar Siqueland, and students Peter Jusczyk '70 and James Vigorito '71 that gave birth to the scientific study of how babies perceive language. Their finding - that babies could distinguish two different speech sounds in the same manner as adults - made other researchers stop and think. While spoken-language acquisition had been studied in young children since the 1930s, suddenly, after the Brown study, there was a more complex question to investigate: what are babies hearing and thinking before they begin to speak?

At the time, infant speech perception couldn't have been further from Jim Morgan's mind. In the mid-1970s, he dropped out of Washington University in St. Louis to move to Israel and work on a kibbutz. While learning Hebrew, he became fascinated with the nuances of pronunciation and word recognition, subtleties he'd never thought to notice in his own language. After returning home and graduating from college, Morgan pursued his newfound interest by earning a doctorate in linguistics and psychology, which he began at the University of California, San Diego, and completed at the University of Illinois. A professor at the University of California steered Morgan toward studying language learning in children, research he continued as a faculty member at the University of Minnesota. In 1989, he moved to Brown, where, in a couple of cinder block rooms in the basement of Metcalf Hall, he now tests 500 to 600 babies each year.

Tack. Tack. Tack. Crip. What Johan cannot see as he listens to these words and plays with the toy frog is Nancy Burt Allard, who manages the Metcalf Infant Research Lab. Allard is just down the hall, sitting in another tiny room in front of a computer and a television monitor that displays Johan's image. The computer is programmed to pipe strings of vocalized words into a speaker in the room where Johan is sitting. Allard - and Morgan, when he takes his turn in the lab - controls the timing of each word string. Allard also uses a microphone to communicate through headphones with Heather Bortfeld, the woman with the toy frog - actually a postdoctoral researcher working with Morgan.

A friendly man with dark, wavy hair and a beard touched with gray, Morgan has lately been investigating how babies pick familiar words out of fluent speech - more specifically, what characteristics of words and sentences make them easier or harder to recognize. He and his assistants are observing seven-, ten-, and thirteen-month-olds to see when, and over what length of time, this ability develops. The babies aren't always reliable research subjects - a missed nap or an empty stomach can spell disaster - but their responses during Morgan's experiments can provide a remarkable glimpse into how their brains deal with language.

The words Morgan is using for this experiment - tack and crip - have no special meaning; the idea is that Johan will turn his head toward the speaker when he hears a new word after several repetitions of the first word. When he does turn his head, Allard rewards him by remotely activating one of two "reinforcers," the clear boxes on the wall that light up to display the stuffed bear and the Energizer Bunny-like toy. To keep Johan from fixating on the reinforcers and turning his head too much, Bortfeld places small toys, such as the frog, on the table for him to look at and touch. "It's all a balancing act," says Allard.

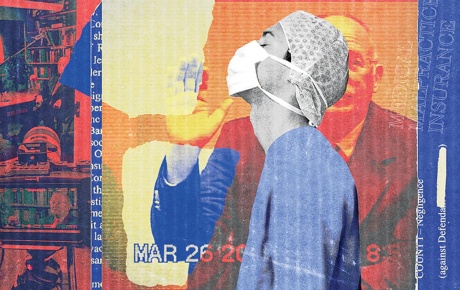

Associate Professor of Cognitive and Linguistic Sciences James Morgan first became fascinated with the subtleties of language while learning Hebrew on an Israeli kibbutz.

The experiment is divided into two phases. The first, called "shaping," is a kind of warm-up for Johan. When the word he's listening to changes - from tack to crip or vice versa - Allard's computer increases the volume so he'll be more likely to notice and turn his head. Gradually the sound goes back down, and the second phase of the experiment begins when all the words are played at the same volume. By removing a change in volume as a cue, Allard is able to assess more accurately when Johan is truly recognizing a new word.
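For readers who think in code, the two-phase schedule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the lab's actual software: the function name `build_trial_schedule`, the trial counts, the 10-decibel starting boost, and the linear fade are all hypothetical choices. The article says only that the volume increase "gradually" goes away.

```python
import random

WORDS = ("tack", "crip")  # the two nonsense words used in the session


def build_trial_schedule(n_shaping=6, n_test=6, boost_db=10.0, seed=0):
    """Build a list of word-change trials for a two-phase session.

    Assumed, not taken from the lab: trial counts, the size of the
    volume boost, and a linear fade across the shaping phase.
    """
    rng = random.Random(seed)
    trials = []
    for i in range(n_shaping + n_test):
        background = rng.choice(WORDS)
        # The "change" word is whichever word is not the repeated background.
        change = WORDS[1] if background == WORDS[0] else WORDS[0]
        if i < n_shaping:
            phase = "shaping"
            # The boost starts at boost_db and shrinks on every shaping trial.
            boost = boost_db * (n_shaping - i) / n_shaping
        else:
            phase = "test"
            # In the test phase, volume no longer cues the word change.
            boost = 0.0
        trials.append(
            {"phase": phase, "background": background,
             "change": change, "boost_db": boost}
        )
    return trials


if __name__ == "__main__":
    for trial in build_trial_schedule():
        print(trial)
```

Because the boost is zero throughout the test phase, a head turn there can be credited to the word change itself rather than to a louder sound, which is the point of the design Allard describes.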

If Johan continues to turn his head at the appropriate times, he'll get asked back for a second, more complicated session. At that time, Allard will play a recording of a brief story with sentences containing the words tack and crip, as well as other similar-sounding words, with the hope that the boy will turn his head when he hears them in the story. As in the training session, there will be dozens of variables: how loudly the words are played over the speaker, whether the reinforcers are moved or kept immobile, what toys are placed on the table.

The biggest variables are the infants themselves. Sometimes older babies get too distracted playing with toys on the table to listen to the speech sounds; younger babies occasionally find a research assistant's face more interesting than any of the other stimuli. "Methodologically, it's tough," says Morgan. "We're obviously confined to behavioral responses. We can't ask thirteen-month-olds what they're hearing."

Despite the difficulties, Morgan has managed to shed some light on how babies process words. Over the past few years he has found that the rhythm of syllables helps infants learn to segment speech, or perceive when one word ends and another begins. Other researchers, including Peter Jusczyk, now a professor at Johns Hopkins University, have shown that rhythm plays a large part in sing-songy Parentese. But Morgan's investigations dig much deeper. He has shown that between the ages of six and nine months, babies whose native language is English seem to develop a particular rhythmic bias as they listen to people talk. They're more apt to notice trochaic syllable patterns, in which the first syllable gets the stronger emphasis, than iambic patterns, in which the second syllable is emphasized - rabbit, for example, instead of raccoon.

Morgan has also observed that babies, during the same six-to-nine-month age period, begin to distinguish specific sequences of sounds. Say "Dakota" several times to a six-month-old, then change the order of the syllables to "ko-da-ta," and he won't notice any difference. A nine-month-old will.

As they grow, babies instinctively regulate their speech-perception abilities. From birth to seven or eight months, they can discern subtle differences in pronunciation, such as the "t" sounds in stop and butter. After ten months, they no longer seem to notice that contrast. They've shifted their focus to a higher, more abstract level of thinking: whole words. "Babies are creatures suspended in oceans of information," says Morgan. "They survive - and advance - by not attending to all of it."

All of this points to one conclusion: experience matters. Even though most infants are born with a sophisticated neurological capacity for speech, babies who hear lots of words will understand more words and perhaps begin to speak sooner. Chomsky's theory that language is inborn is valid, in Morgan's opinion, to a point. But most of the research on what parents say to their babies has been based on transcriptions, and "there's a tremendous amount of information - rhythm, intonation - that's filtered out when words go on paper," Morgan says.

It is far more valuable, Morgan believes, to study babies directly, using highly controlled experiments. Methods and stimuli used to test the babies are constantly refined, and results aren't considered accurate until they're replicated by other researchers. But the thought processes of babies are tricky to quantify; they can't be tracked and measured like motor skills. Even the most controlled infant-speech-perception experiments conducted today can only describe some limits of babies' abilities as they grow. That leaves scholars with "islands of understanding," says Morgan, "but no bridges in between." Yet as those islands come together, child-development experts will be increasingly able to identify babies whose hearing or comprehension skills may not be developing properly - problems that usually don't surface until after a child is old enough to speak. The bottom line for now? Babies know a lot more about language than anyone ever thought.

Johan is living proof. During the "tack/crip" training session, he is alert and active, grabbing at the toys on the table and turning his head quickly at almost all the correct times. Concluding the trial, Allard guesses that he lives in a bustling household with lots of talking, though she won't speculate whether the talking is directed at him during play or takes place in conversations that go on around him or on television. Those kinds of variables, along with more basic ones such as gender, race, and socioeconomic status, have yet to be explored in infant speech perception. Do they even matter? It's hard to say, according to Morgan. What makes the difference is living in a rich and varied environment. "The larger the database," he explains, "the more quickly a baby can progress."

Older children and adults studying a new language aren't that different. Living in a country where that language is spoken, hearing it constantly, is a far more effective teaching tool than the fanciest dictionaries, audiotapes, or CD-ROMs. New parents, it seems, don't need to fill their living rooms with special baby toys. Simply talking to infants face-to-face provides all the linguistic training they need.

Words, facial expressions, rhythm, pitch - all the signals of speech bounce around in a baby's brain, setting in motion an epic mental journey. It's the initial leg of this journey that fascinates Morgan, the mysterious phase that ends at another beginning: a toddler's first spoken words. These words, and the thousands that follow, are what set us apart from other animals. To explore where they come from is to search for the essence of humanity.